Learn to Use Copilot in Microsoft Fabric

Level: Beginner

Learn how to start using Copilot capabilities in Microsoft Fabric and how Copilot works in Microsoft Fabric workloads. This tutorial guides you through various scenarios where Microsoft Copilot excels, from code and query generation to data analysis and visualization.

Additionally you learn how various personas such as Data Engineers, Data Scientists, Data Analysts, and BI Engineers/Users can use Copilot capabilities to accelerate their day to day tasks, by using Copilot in Microsoft Fabric workloads.

By the end of this tutorial, you'll have a solid foundation in place to understand how Microsoft Copilot can enhance your productivity across various tasks.

Exercises in this tutorial include:

- Creating Intelligent data transformation using Copilot for Data Factory

- Accelerating data engineering tasks using Copilot for Data Engineering

- Creating analytical models using Copilot for Data Science

Experiences for Copilot in Microsoft Fabric

- Copilot for Data Factory

- Copilot for Data Engineering and Data Science

- Copilot for Data Warehouse

- Copilot for Real-Time Intelligence

- Copilot for Power BI

Copilot for Data Factory

Hello! And welcome to the Copilot for Data Factory Exercise!

In this exercise, you learn how to use Copilot for Data Factory to create new transformations for existing queries, provide summaries of existing queries, create new queries that reference existing ones, generate new data, and create complex queries to slice and dice data.

You also learn how to write effective prompts to better utilize Copilot's capabilities. Below we also include a step-by-step video to guide you visually through the exercise.

To complete this exercise, you need to enable copilot in Microsoft Fabric.

You also need to download the required assets for this exercise from this link.

Scenario: A data engineer is looking to load and transform sales and customer data into a Lakehouse. The engineer needs to:

- Understands already existing queries.

- Create transformations for existing queries to add new columns to aid in further analysis.

- Clean data to remove empty values.

- Create a new query with a list of all dates the year 2012 to slice and dice data.

The following video shows the overall process you go through this exercise:

Let's get started!

Using Copilot for Data Factory

Objective: Understand how to use Copilot for Data Factory to create new transformations for existing queries, provide summaries of existing queries, create new queries that reference existing ones, generate new data, and create complex queries to slice and dice data.

Perform the following steps:

Using the experiences switcher at the bottom left of the Microsoft Fabric workspace, switch to the Data Factory experience. In the Data Factory experience, create a new Dataflow Gen2 item

Upload the required data by selecting Get Data, and choose Text/CSV option then upload the *Human Resources, Sales, Person, Customer, Purchasing, and Products csv files.

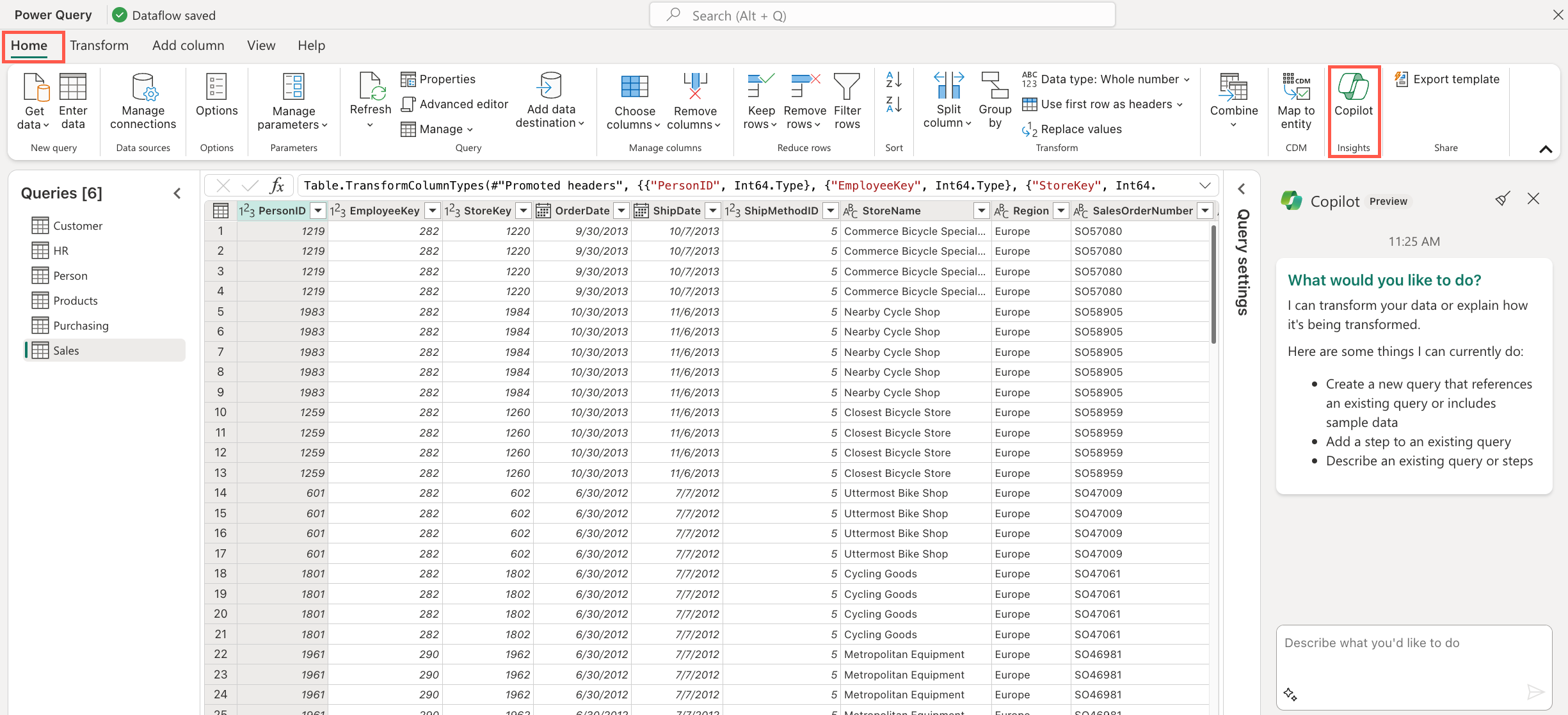

Your data is loaded into your dataflow as queries. Next, from the home tab Select the Copilot button. This opens the Copilot panel on the right side of the screen, from here you see that Copilot suggests prompts and also provides you with the ability to enter your own prompts.

Now, we need to begin performing some data transformations. but before we begin, we need to understand some of the existing queries. We use Copilot to provide summaries of these queries. Select the

Salesquery, then from the bottom left if the Copilot panel, select the starter prompt icon and then the Describe this query option. Submit the prompt and review the description provided by Copilot. You can repeat this process for the other queries.Note

Ensure that the query you want Copilot to assist/respond to is selected before submitting the prompt.

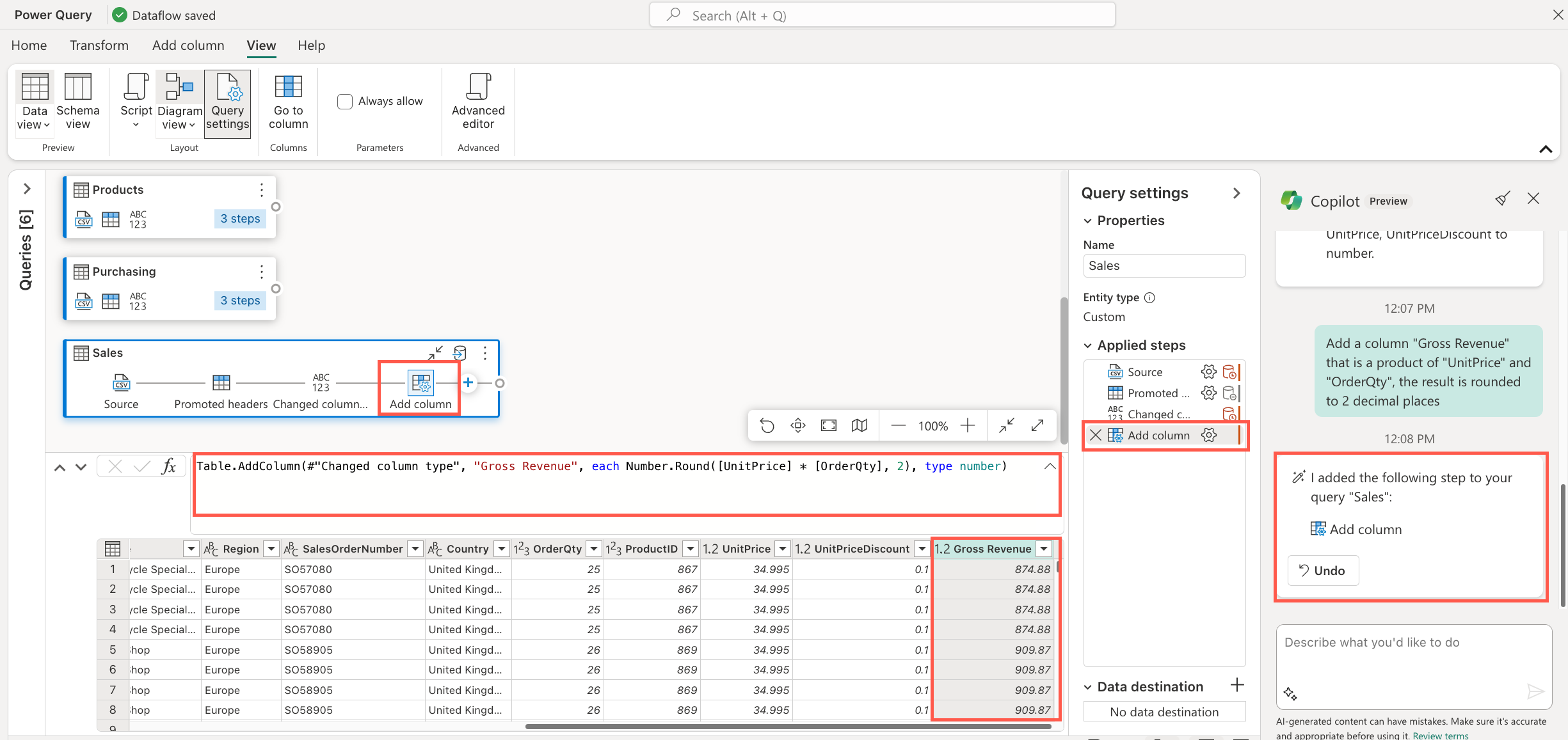

Now that we have an understanding of the data we're working with; we can now begin working on the various transformations. First, you need to enable your team to get the revenue generated by the sales so they can build better models and visualizations later. To achieve this, select

Salesquery and enter the following prompt into the text box in the Copilot panel:'Add a column 'Gross Revenue' that is a product of 'UnitPrice' and 'OrderQty', the result is rounded to two decimal places.

Submit the text. Copilot returns a response card with a brief summary of the changes made and also adds a new column to the query. Take a moment to review the changes made.

Notice the response card also provide you with an

undobutton to allow you to revert the changes made if needed.You need to get monetary value of the discount amount given to each sale. To achieve this, submit the following prompt:

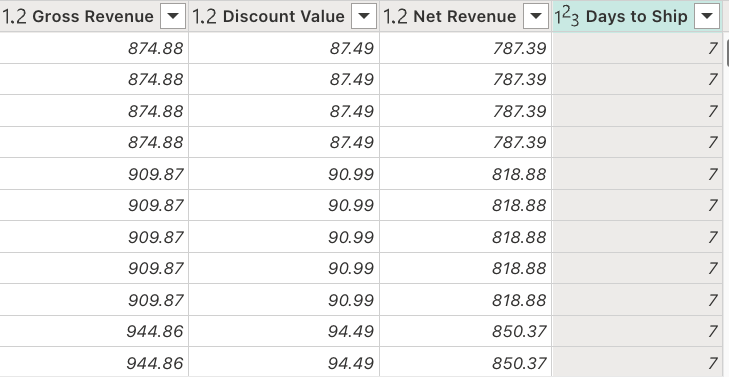

Add a column 'Discount Value' that is a product of 'Gross Revenue' and 'UnitPriceDiscount', the result is rounded to two decimal places.

Notice, that after Copilot responds to this prompt with the response card and associated changes to the query, the

Undobutton in the previous response card is no longer available. This is because Copilot only allows you to undo the last change it made.For better analysis, you need to have another column that will get the value of the generated revenue while taking into account the discount value. Can you use Copilot to add a new column that will get the difference between Gross Revenue and Discount Value columns and round the result to two decimal places?

You also need to understand the effect the number of shipping days have on the sales and revenue generated. To achieve this, use Copilot to add a new custom column to get the difference between OrderDate and ShipDate columns.

As a result of the transformations made in the previous steps the

Salesquery should now have these other columns

Next rename the following queries manually:

- Customer to DimCustomer

- Purchasing to DimShipping

- Products to DimProducts

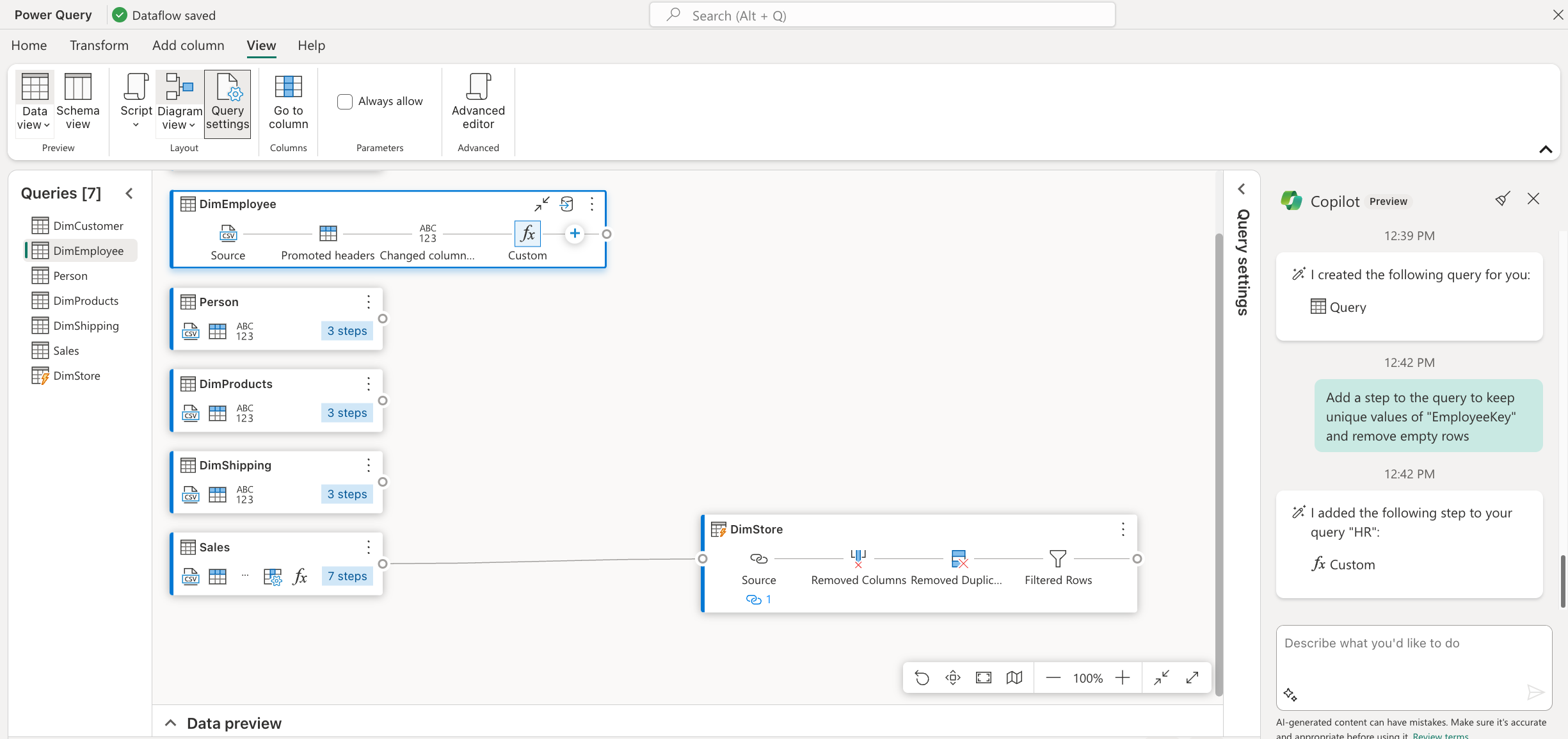

Next, you need to create a dimension table that allows you to analyze each store based on sales. To achieve this, select the

Salesquery and submit the following prompt:Create a new query and select only 'StoreKey' and 'StoreName' columns and keep unique values. And remove empty values

Copilot creates a new query, named

query. Rename this query toDimStore.Next, you need to have a dimension table that allows you to store employee information for use in your visualizations. To achieve this, select the

Human Resourcesquery and submit the following prompt then rename theHuman Resourcesquery toDimEmployeeAdd a step to the query to keep unique values of 'EmployeeKey' and remove empty rows

Once the above two steps are completed, you should have the following queries in your dataflow:

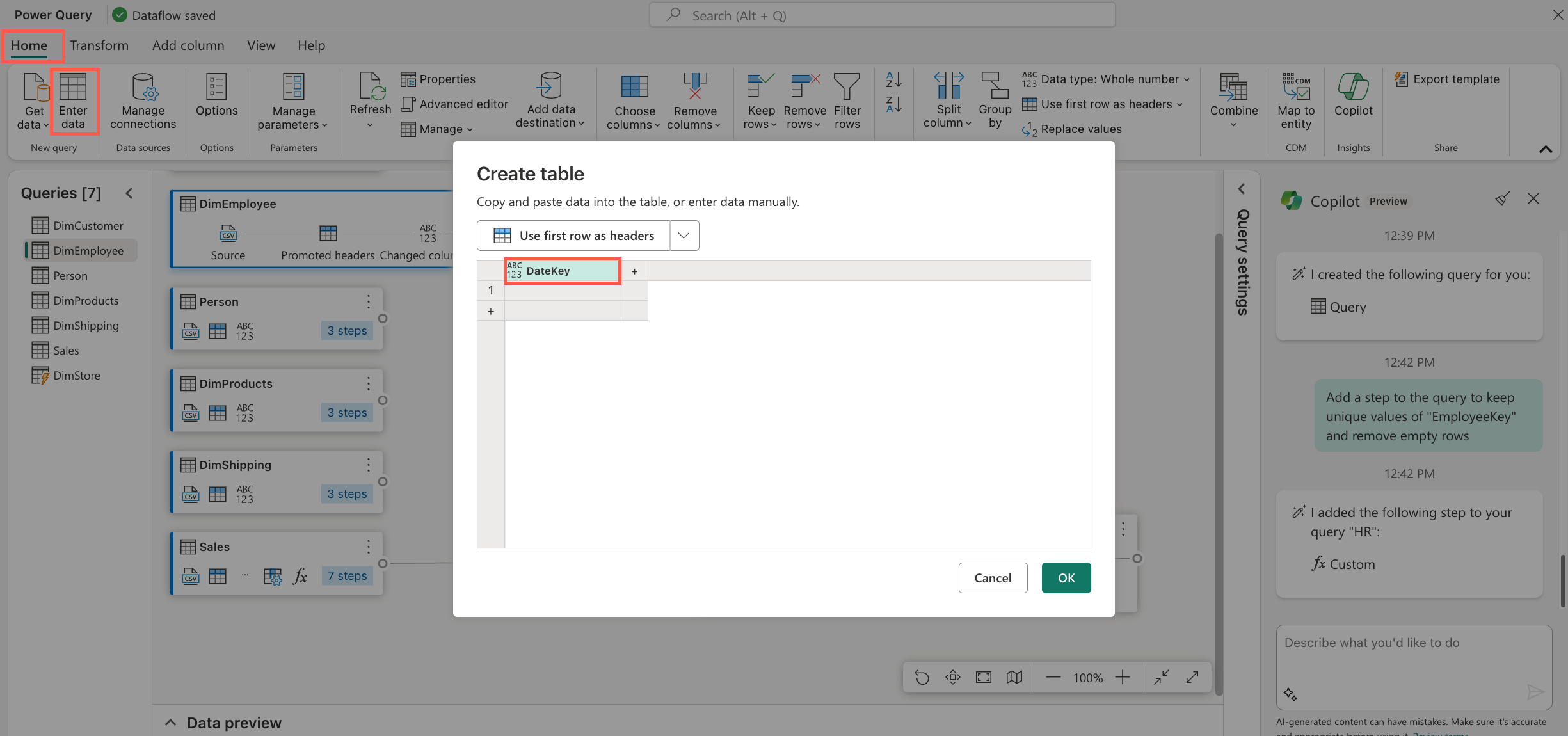

Next you need a dimension table that allows you to slice your data based to see the sales performance over a Quarter, Year, and Fiscal Year. To achieve this, manually add a new query by selecting Enter Data from the home tab and name the first column

DateKey.

Once the query is created, submit the following prompt for Copilot to generate data for the DateKey column

Add a step to the query to add date values to the "DateKey" column starting from 1/1/2012 to 12/31/2013

Change the type of the DateKey column to

Date.Now, you need to split the date values into Days, Month, MonthName, and Year for better analysis. You can achieve this with the following prompt:

Add new columns "Day", "Month" and "Year"

Next, you need to be able to identify the start of the Fiscal Year for better analysis when looking at sales performance. To achieve this, use the following prompt:

Add 1 to 'Year' if the 'Date' month is greater than or equal to 7 and keep as is if the 'Date' month is less than or equal to 6. Store the result in a new column 'Fiscal Year' and take the last two digits of the result combine them with the prefix 'FY'

Lastly, you also want to see the sales performance based on each quarter of the fiscal year. You need to have a Quarter column within the table. To achieve this, use the following prompt:

Add a column 'Quarter' with values as 'Q1' when the 'Month' is equal to 7 or 8 or 9, 'Q2' when the 'Month' is equal to 10 or 11 or 12, 'Q3' when the 'Month' is equal to 1 or 2 or 3, 'Q4' when the 'Month' is equal to 4 or 5 or 6

Rename the query to

DimDate. At this point the DimDate query should look like this:

Finally add a Lakehouse as the destination for all the queries in the dataflow and then publish the dataflow.

Congratulations!

And that's it! You completed the tutorial and learned how to use Copilot for Data Factory to create new transformations for existing queries, provide summaries of existing queries, create new queries that reference existing ones, generate new data, and create complex queries to slice and dice data.

Remember, you're using Copilot, not Autopilot. These tools are there to be your assistant in your day-to-day work rather than doing work for you.

What were your thoughts on these exercises? What would you like to see more of? Let us know in the comments of the embedded video.

Copilot for Data Engineering & Data Science

Welcome to the Copilot for Data Engineering & Data Science exercise in Microsoft Fabric!

This exercise is the second in the Copilot in Microsoft Fabric tutorial. In this exercise you learn how Copilot can be used in the notebooks for the Data Engineering and Data Science workloads to generate code snippets, provide explanation for existing code, suggest data visualizations, suggest analytical machine learning models, and more.

You also learn how to write effective prompts to better utilize Copilot's capabilities in diverse scenarios. A step-by-step video is also included to visually guide you visually through the exercise.

As in the previous exercise, to complete this exercise, you need to enable Copilot in Microsoft Fabric and ensure you have the required assets for this exercise. If you haven't done so, you can download the required assets from this link.

You also need the following tables loaded in your Lakehouse from the previous exercise:

- DimCustomer

- DimProducts

- Sales

- DimShipping

- DimStore

- DimDate

- DimEmployee

- Person

Scenario: A data engineer / data scientist is looking to analyze the demographics of the customers. The engineer needs to:

- Understand the existing code that loads and transforms the customer data.

- Transform the data to remove empty values and add new columns to aid in further analysis.

- Visualize the customer data by various demographics.

- Create a machine learning model to predict the likelihood of a customer to purchase a bike to help the marketing team build a targeted campaign.

The following video shows the overall process you go through this exercise:

Let's get started!

Copilot for Data Engineering & Data Science

Objective: Understand how to use Copilot in notebooks for Data Engineering and Data Science workloads to generate code snippets, provide explanation for existing code, suggest data visualizations, suggest analytical machine learning models, and more.

To complete this exercise, perform the following steps:

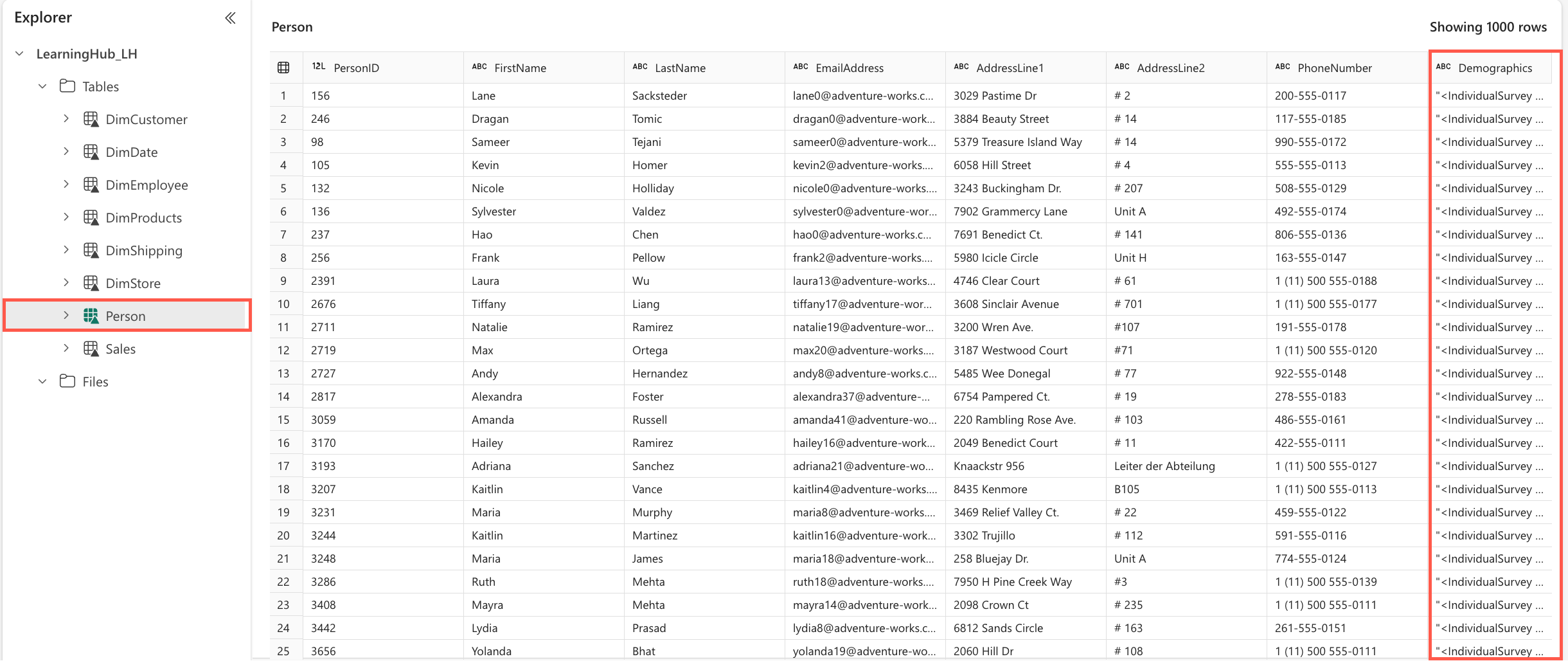

Open the Lakehouse in which data from the Data Factory in the previous exercise is loaded. Inside the Tables folder, open the

Persontable.

This opens the

Persontable. The last col umn, namedDemographics, contains the demographics for each person as XML data. In the next steps, we'll transform this data to extract it into its own table and build an analytical model.From the assets you downloaded, you will use the *[load_demographics.ipynb] notebook in this exercise.

Using the experiences switcher at the bottom of the Fabric workspace, switch to the Data Engineering experience. From here, select the Import notebook item. On the panel that opens, select the Upload button and upload the load_demographics.ipynb file.

From the left navigation bar, select your workspace and open the load_demographics notebook. Choose Lakehouses as your data source from the explorer and select Add. Select the Existing Lakehouse option and select Add again. Pick the Lakehouse where your data is stored and select Add to finalize. The explorer displays the Lakehouse with all tables from the previous exercise.

The notebook we loaded contains some existing code, we use Copilot to help us understand this existing code and the DataFrames in the code before beginning any other transformation tasks. Before proceeding in the first cell, ensure to rename the Lakehouse in the Select statement to match the name of your Lakehouse.

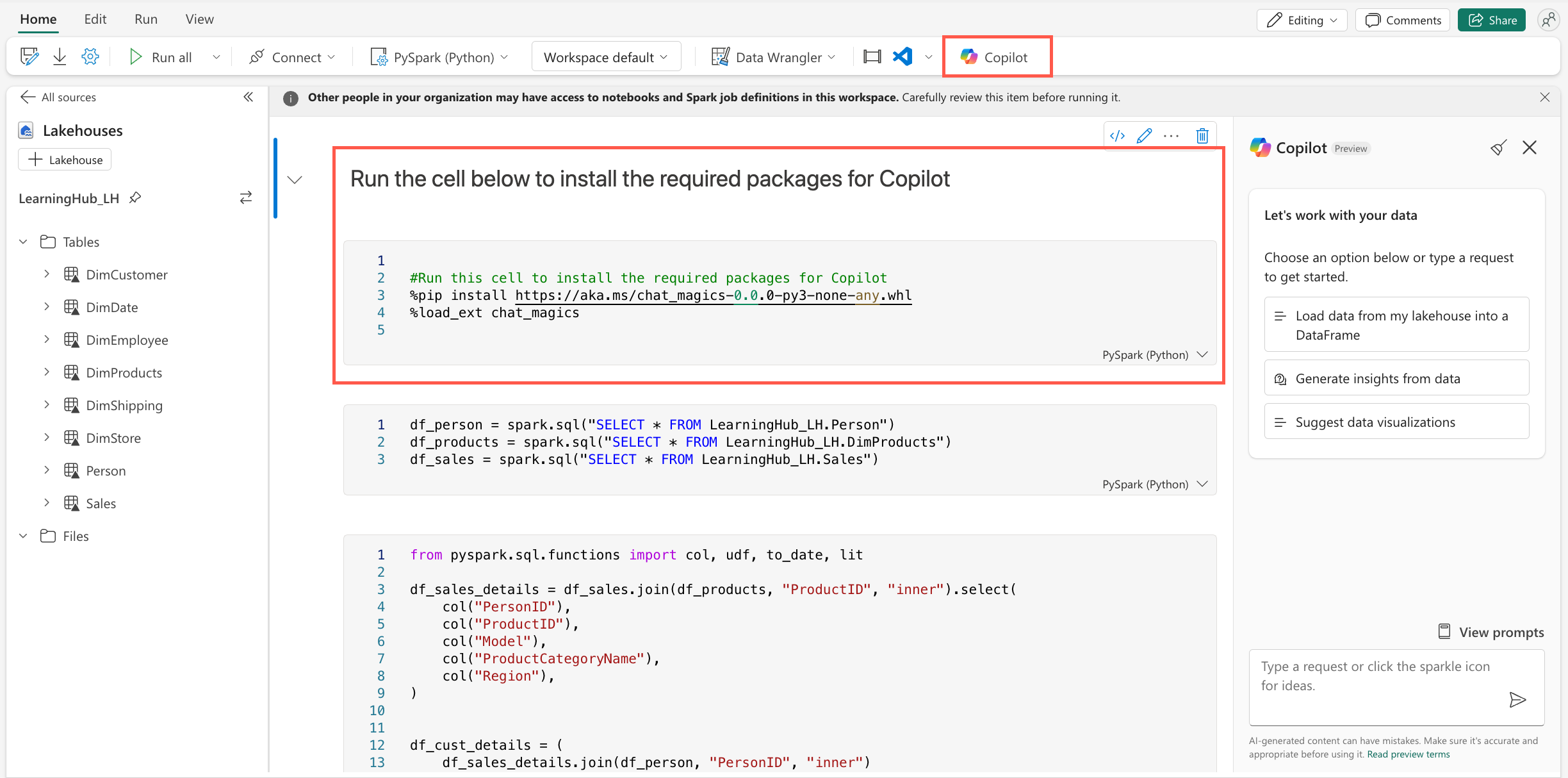

Add Copilot capabilities by selecting the Copilot button from the menu ribbon. This opens the Copilot panel on the right side of the screen.

Select the Get started button in the Copilot panel. This adds a cell at the start of the notebook, with code that adds the required packages to use Copilot in the notebook.

Run the code cell to add the required packages to the notebook. Once this completes, take time to review the output; This includes as section on Data Privacy & Security and Chat_Magics. We'll be using the Chat_Magics in the next steps.

Run the next cell that loads the data from the

Person,DimProducts, andSalestables into DataFrame.Add the following chat-magics command at the beginning of the next two cells, and run both cells.

%%chat

Explain this block of code step by step

For the first cell, Copilot explains how we're performing various joins and renaming of columns. In the output of the second cell, Copilot explains how we're parsing the XML data and transforming it to a structured DataFrame. Do you notice Copilot mentions, the use of a UDF? What is a UDF?

On the open Copilot pane on the right, submit the following prompt:

What is a UDF?

Before proceeding any further, let's add some comments in these cells to improve readability. Remove the chat_magics for chat in both cells and replace with the following chat_magics:

%%add_comments

Run the cells to add the comments.

Note

Ensure that you review the comments added to the cells to ensure they are accurate and that the code was not altered in any way.

Run both cells.

Use Copilot to understand the DataFrame

df_cust_detailscreated in the steps above. Submit the following prompt:%describe

df_cust_details

Confirm the columns that Copilot describes in the output exist in the DataFrame by running the following line of code in a new cell:

display(df_cust_details)

You notice that the

TotalPurchaseYTDhas all empty values, we need to drop it. Add a new cell and run the following prompt:%%code

Remove the TotalPurchaseYTD column

Copilot generates the code to remove the column, review the code and run the cell to remove the column.

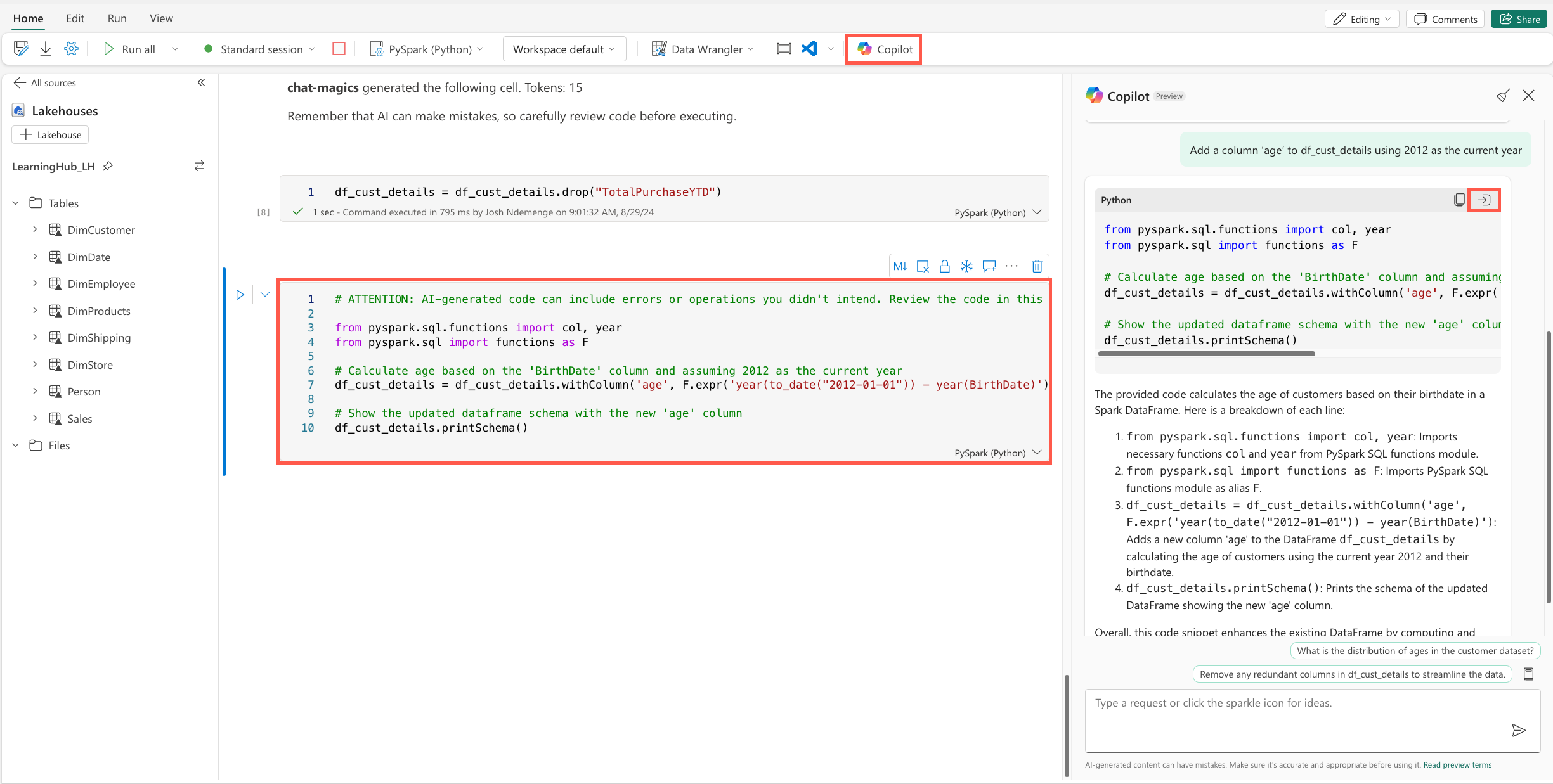

Add a new column called Age, Use the Copilot panel and submit the following prompt to get the code to add the column:

Add a column ‘age’ to df_cust_details using 2012 as the current year

Take time to review the code generated and explanation provided by Copilot, then use the insert code button on the response card to add the code to a new cell and run the cell.

Since we want to build an analytical model to predict the likelihood of a customer to purchase a bike, we need to transform the data to add a new column that indicates if a customer has purchased a bike or not. Use the Copilot panel to submit the following prompt:

Add a new column ‘IsBikeBuyer’ with a value of 1 for rows where ‘ProductCategory’ is ‘Bikes’, and 0 otherwise.

Review the code generated by Copilot and the explanation provided, then use the insert code button on the response card to add the code to a new cell. If the code generated creates a new DataFrame for the filtered data rename the DataFrame back to

df_cust_details. Run the cell.Using the Copilot panel, select the prompt guide icon and then the Suggest data visualizations option. In the prompt text box, replace

[YOUR_DATA_NAME]withdf_cust_detailsand submit the prompt.Pick one of the suggested visualizations and use either the Copilot panel or Chat_Magics to generate code for the visualization.

Take time and try out the other options provided by Copilot in the prompt guide.

Use the Chat_Magics to save the DataFrame to a table in the Lakehouse.

%%code

save the df_cust_details to a new table the Lakehouse

Review the code generated by Copilot, and rename the table to a more suitable name if needed. Run the cell to save the DataFrame to a new table in the Lakehouse.

Refresh the Data Sources explorer to see the new table created in the Lakehouse.

And finally, how can we use Copilot to help the marketing team build a targeted campaign by predicting the likelihood of a customer to purchase a bike? Using the Copilot panel to submit the following prompt:

Suggest how we can build a predictive machine learning model using df_cust_details to predict if a customer is likely to buy a bike or not to help Adventure Works, the bike shop, build a targeted marketing campaign, the ‘IsBikeBuyer’ column is the target column.

Carefully review the code generated by Copilot and the explanation provided. Use the insert code button on the response card to add the code to a new cell and run the cell.

Congratulations!

And that's it! You've successfully completed the Copilot for Data Engineering & Data Science exercise in Microsoft Fabric. You learnt how to use Copilot in notebooks to generate code snippets, provide explanation for existing code, suggest data visualizations, suggest analytical machine learning models, and more.

Remember, you're using Copilot, not Autopilot. These tools are there to be your assistant in your day-to-day work rather than doing work for you.

What were your thoughts on these exercises? What would you like to see more of? Let us know in the comments of the embedded video.

Congratulations!

You've completed this tutorial

Congratulations on completing the tutorial! You've now learned how to Copilot in Microsoft Fabric across the various workloads.

Have an issue with this section? If so, please give us some feedback so we can improve this section.