
This article describes how the data you provide is processed, used, and stored when you deploy models from the model catalog. See also the Microsoft Products and Services Data Protection Addendum, which governs data processing by Azure services.

What data is processed for models deployed in Azure Machine Learning?

When you deploy models in Azure Machine Learning, the service processes the following types of data to provide the service:

Prompts and generated content. You submit prompts, and the model generates content (output) through the operations it supports. Prompts might include content that you add through retrieval-augmented generation (RAG), metaprompts, or other functionality included in an application.

Uploaded data. For models that support fine-tuning, you can upload your data to the Azure Machine Learning Datastore for use in fine-tuning.
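To make the first category concrete: everything an application places in a request, including a metaprompt and passages retrieved for RAG, becomes part of the prompt that the service processes. The following minimal sketch assumes a chat-style `messages` request schema (a hypothetical shape; the real format depends on the model and deployment type) and the helper name `build_chat_payload` is illustrative only. It just builds the payload and sends nothing.

```python
import json


def build_chat_payload(metaprompt: str, retrieved_chunks: list, question: str) -> dict:
    """Assemble a request body in a hypothetical chat-style schema.

    Everything returned here (the metaprompt, the retrieved passages, and the
    user's question) would be sent to, and processed by, the deployed model.
    """
    # RAG content is folded into the prompt text itself, so it is processed
    # exactly like the user's own input.
    context = "\n\n".join(retrieved_chunks)
    return {
        "messages": [
            {"role": "system", "content": metaprompt},
            {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
        ]
    }


payload = build_chat_payload(
    "You are a helpful assistant.",
    ["Doc A: refunds take 5 days.", "Doc B: shipping is free."],
    "How long do refunds take?",
)
print(json.dumps(payload, indent=2))
```

The point of the sketch is the data boundary: nothing in `payload` is private to the application once it is sent, so retrieved documents deserve the same care as user input.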

Generate inferencing outputs with managed compute

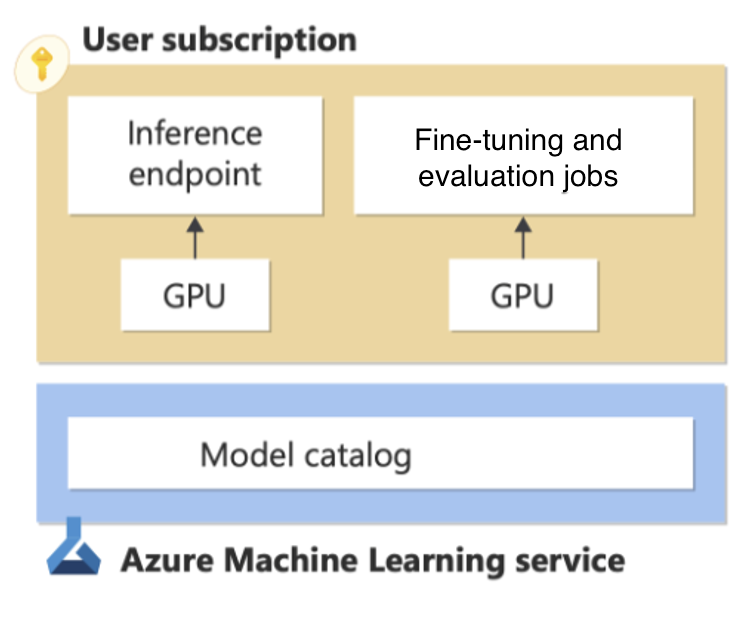

When you deploy models to managed compute, the model weights are deployed to dedicated virtual machines, and a REST API is exposed for real-time inference. To learn more, see the documentation on deploying models from the model catalog to managed compute. You manage the infrastructure for these managed compute resources, and Azure's data, privacy, and security commitments apply. To learn more, see the Azure compliance offerings applicable to Azure Machine Learning.
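The REST API exposed by a managed compute deployment is how prompts reach the virtual machines you manage. The following is a minimal sketch of building such a request with the Python standard library, assuming key-based authentication sent as a bearer token and the optional `azureml-model-deployment` header for routing to a named deployment. The scoring URI, key, deployment name `blue`, and the input schema are all hypothetical placeholders; the actual schema depends on the model. The request is built but not sent.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical placeholders: use your own endpoint's scoring URI and key.
SCORING_URI = "https://my-endpoint.eastus.inference.ml.azure.com/score"
API_KEY = "<your-endpoint-key>"


def build_scoring_request(
    uri: str, key: str, body: dict, deployment: Optional[str] = None
) -> urllib.request.Request:
    """Build (but do not send) a POST request to a managed online endpoint."""
    headers = {
        "Content-Type": "application/json",
        # Assumed key-based auth: the endpoint key is sent as a bearer token.
        "Authorization": f"Bearer {key}",
    }
    if deployment is not None:
        # Optional header that pins the request to one named deployment
        # behind the endpoint instead of using the endpoint's traffic split.
        headers["azureml-model-deployment"] = deployment
    data = json.dumps(body).encode("utf-8")
    return urllib.request.Request(uri, data=data, headers=headers, method="POST")


# The input schema depends on the model; this shape is an assumption.
request = build_scoring_request(
    SCORING_URI,
    API_KEY,
    {"input_data": {"input_string": ["Hello"]}},
    deployment="blue",
)
# urllib.request.urlopen(request) would send the prompt to the endpoint.
```

Keeping request construction separate from sending makes it easy to inspect exactly which data leaves the application before anything goes over the network.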

Although containers for "Models Sold Directly by Azure" are scanned for vulnerabilities that could exfiltrate data, not all models available through the model catalog are scanned. To reduce the risk of data exfiltration, you can protect your deployment by using virtual networks. To learn more, see network isolation for the model catalog. You can also use Azure Policy to regulate which models your users can deploy.

Generate inferencing outputs with standard deployments

When you deploy a model from the model catalog (base or fine-tuned) as a standard deployment for inferencing, you get an API that gives you access to the model, which is hosted and managed by the Azure Machine Learning service. For more information, see Models-as-a-Service. The model processes your input prompts and generates outputs based on its functionality, as described in the model details provided for the model. The model is provided by the model provider, and your use of the model (and the model provider's accountability for the model and its outputs) is subject to the license terms provided with the model. Microsoft provides and manages the hosting infrastructure and the API endpoint. Models hosted in Models-as-a-Service are subject to Azure's data, privacy, and security commitments. For more information, see the Azure compliance offerings applicable to Azure Machine Learning.

Important

This feature is currently in public preview. This preview version is provided without a service-level agreement, and we don't recommend it for production workloads. Certain features might not be supported or might have constrained capabilities.

For more information, see Supplemental Terms of Use for Microsoft Azure Previews.

Microsoft acts as the data processor for prompts and outputs sent to and generated by a model deployed as a standard deployment. Microsoft doesn't share these prompts and outputs with the model provider, and Microsoft doesn't use them to train or improve Microsoft's, the model provider's, or any third party's models. Models are stateless, and no prompts or outputs are stored in the model. If content filtering (preview) is enabled, the Azure AI Content Safety service screens prompts and outputs for certain categories of harmful content in real time. For more information, see how Azure AI Content Safety processes data. Prompts and outputs are processed within the geography specified during deployment, but they might be processed between regions within that geography for operational purposes (including performance and capacity management).
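One practical consequence of stateless models is that the model remembers nothing between calls: a chat application must keep the conversation itself and resend whatever context each request needs. The following is a minimal sketch of that pattern, assuming a chat-completions style API. The endpoint URL, the `/chat/completions` route, the bearer-token header, and the class name `StatelessChatClient` are hypothetical; check your deployment's details for the actual URL and authentication scheme. Requests are built but not sent.

```python
import json
import urllib.request

# Hypothetical placeholders for a standard deployment's endpoint and key.
ENDPOINT = "https://my-model.eastus2.models.ai.azure.com"
API_KEY = "<your-api-key>"


class StatelessChatClient:
    """Keeps conversation history on the client side.

    Because the hosted model stores no prompts or outputs between calls,
    every request must carry the full history it needs.
    """

    def __init__(self, endpoint: str, key: str, system_prompt: str):
        self.endpoint = endpoint.rstrip("/")
        self.key = key
        self.history = [{"role": "system", "content": system_prompt}]

    def build_request(self, user_message: str) -> urllib.request.Request:
        self.history.append({"role": "user", "content": user_message})
        # Serialize a snapshot of the whole history into this request body.
        body = json.dumps({"messages": self.history}).encode("utf-8")
        return urllib.request.Request(
            f"{self.endpoint}/chat/completions",  # route is an assumption
            data=body,
            headers={
                "Content-Type": "application/json",
                "Authorization": f"Bearer {self.key}",  # auth scheme is an assumption
            },
            method="POST",
        )

    def record_reply(self, assistant_message: str) -> None:
        # Store the model's output locally; the model does not retain it.
        self.history.append({"role": "assistant", "content": assistant_message})


client = StatelessChatClient(ENDPOINT, API_KEY, "You are concise.")
first = client.build_request("What is RAG?")
client.record_reply("Retrieval-augmented generation.")
second = client.build_request("Give an example.")
```

Here `first` carries two messages and `second` carries four, because the earlier turns have to be sent again. Discarding the client object discards the conversation; nothing in the sketch relies on the service remembering earlier turns.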

As explained during the deployment process for Models-as-a-Service, Microsoft might share customer contact information and transaction details (including usage volume associated with the offering) with the model publisher so that the publisher can contact customers regarding the model. For more information about the information available to model publishers, see the related documentation.

Fine-tune a model with standard deployments (Models-as-a-Service)

If a model available for standard deployment supports fine-tuning, you can upload data to (or designate data already in) an Azure Machine Learning Datastore to fine-tune the model. You can then create a standard deployment for the fine-tuned model. You can't download the fine-tuned model, but the fine-tuned model:

Is available exclusively for your use.

Can be double encrypted at rest (by default with Microsoft's AES-256 encryption, and optionally with a customer-managed key).

Can be deleted by you at any time.

Training data uploaded for fine-tuning isn't used to train, retrain, or improve any Microsoft or third-party model, except as directed by you within the service.

Data processing for downloaded models

If you download a model from the model catalog, you choose where to deploy the model, and you're responsible for how data is processed when you use the model.